When tuning your application for performance, you may discover that it is the garbage collector that slows things down. For instance, the garbage collector may occupy 50% of the CPU time, which means that your program spends a lot of its time cleaning up garbage instead of doing the things you want it to do. In that case you should diagnose the problem and optimize. Even without such an obvious symptom, you can almost always speed up your application by optimizing garbage collection.

Note: I'm running Scala 2.8 on Windows 7 with a 32-bit JRE version 1.6.0_17.

Note: This article applies to any JVM language, not only Scala. By the end of the article I will present a Scala sample though.

Accessing garbage collector performance data

First, we need to collect GC data.

Before you run your app, you should set a certain Java option that will provide you with garbage collection information. How you set it is up to you - I will show how to set it via command line in Windows:

set JAVA_OPTS=-verbosegc

-verbose:gc is the documented spelling of this option and should work as well.

Now, when you run your application:

scala me.m1key.approximation.Launcher

... the JVM will print output similar to this:

0.074: [GC 886K->173K(5056K), 0.0015737 secs]
0.100: [GC 1066K->128K(5056K), 0.0007356 secs]
0.124: [GC 1009K->183K(5056K), 0.0006837 secs]
0.154: [GC 1079K->130K(5056K), 0.0008542 secs]
0.181: [GC 1021K->148K(5056K), 0.0006898 secs]
0.210: [GC 1039K->241K(5056K), 0.0009012 secs]

You should run your Scala application from the command line to see it. Of course, the output depends on the program you run and is a bit different on every run. Different JVM versions may also format this data differently.

Before we analyze this: you will probably want to have it printed to a file instead of standard output. This option does that:

set JAVA_OPTS=-Xloggc:gc.log

And should you need more data, you can use -XX:+PrintGCDetails.

set JAVA_OPTS=-XX:+PrintGCDetails -Xloggc:gc.log

And you will also get this information:

Heap
 def new generation total 960K, used 117K [0x04180000, 0x04280000, 0x04660000)
  eden space 896K, 6% used [0x04180000, 0x0418fa98, 0x04260000)
  from space 64K, 86% used [0x04260000, 0x0426dcb0, 0x04270000)
  to space 64K, 0% used [0x04270000, 0x04270000, 0x04280000)
 tenured generation total 4096K, used 324K [0x04660000, 0x04a60000, 0x08180000)
  the space 4096K, 7% used [0x04660000, 0x046b1118, 0x046b1200, 0x04a60000)
 compacting perm gen total 12288K, used 7394K [0x08180000, 0x08d80000, 0x0c180000)
  the space 12288K, 60% used [0x08180000, 0x088b8908, 0x088b8a00, 0x08d80000)
No shared spaces configured.

Here you can see each generation and how much space it used.
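The same per-generation figures can also be read from inside a running JVM. The sketch below is not part of my original program: it assumes the standard java.lang.management API (available since Java 5), and the object name HeapPools is made up for illustration. Pool names differ between JVM versions and collectors, so treat the output as informational only.

```scala
import java.lang.management.ManagementFactory

// Hypothetical helper for illustration; not part of the original program.
object HeapPools {
  // One line per valid memory pool (for example eden, survivor, tenured, perm gen).
  // The exact pool names depend on the JVM version and the collector in use.
  def describe(): Seq[String] = {
    val pools = ManagementFactory.getMemoryPoolMXBeans
    (0 until pools.size)
      .map(i => pools.get(i))
      .filter(_.isValid) // getUsage may return null for invalid pools
      .map { pool =>
        val usage = pool.getUsage
        "%s: used %dK, committed %dK".format(
          pool.getName, usage.getUsed / 1024, usage.getCommitted / 1024)
      }
  }

  def main(args: Array[String]): Unit = describe().foreach(println)
}
```

Calling HeapPools.describe() at interesting points of your program gives a rough picture of how full each generation is, without having to wait for the heap summary printed at exit.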

Analyzing GC data

The three most interesting values are throughput, pauses and footprint.

- throughput - the share of total time not spent garbage collecting, i.e. your program doing useful things

- pause - a period during which the application is stopped because garbage collection is running

- footprint - the amount of memory your program took

Let's take the first line of the output apart.

0.074: [GC 886K->173K(5056K), 0.0015737 secs]

- 0.074 - how long the program lived at that point, in seconds

- GC - indicates it is a minor collection (Full GC means major collection)

- 886K - the combined size of live objects before garbage collection

- 173K - the combined size of live objects after garbage collection

- 5056K - the footprint, i.e. total available space, excluding the permanent generation

- 0.0015737 - indicates how long this garbage collection took
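The field breakdown above can be sketched as a small parser. This is only an illustration under the assumption that every line has exactly the shape shown here; the names GcEvent and GcLine are made up, and other JVM versions or extra flags such as -XX:+PrintGCDetails produce lines this pattern will not match.

```scala
// Hypothetical names for illustration; the pattern covers only the simple
// "-verbosegc" line format shown above.
case class GcEvent(uptimeSecs: Double, full: Boolean,
                   beforeK: Long, afterK: Long, totalK: Long,
                   pauseSecs: Double)

object GcLine {
  private val Pattern =
    """(\d+\.\d+): \[(Full GC|GC) (\d+)K->(\d+)K\((\d+)K\), (\d+\.\d+) secs\]""".r

  // Returns None for any line that does not have the expected shape.
  def parse(line: String): Option[GcEvent] = line.trim match {
    case Pattern(uptime, kind, before, after, total, pause) =>
      Some(GcEvent(uptime.toDouble, kind == "Full GC",
        before.toLong, after.toLong, total.toLong, pause.toDouble))
    case _ => None
  }
}
```

For the sample line, GcLine.parse returns an event with beforeK = 886 and afterK = 173, so that minor collection freed 713K.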

What is the difference between minor and major collections? Major collections are slower because they examine all objects to see whether they can be garbage collected; minor collections examine only the young objects.

OK, you can see the footprint here, but that's basically it - throughput and pause you'd have to calculate yourself which is a tedious task. It's quite easy to write a program to analyze those values though. There is a Perl script included in this rather lengthy article on improving Java application performance by Nagendra Nagarajayya and J. Steven Mayer. But there is also a freeware GUI tool you might use.
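To give an idea of how little code such an analysis needs, here is a minimal sketch in Scala. It is not the Perl script mentioned above, and GcSummary is a made-up name. It assumes the simple line format shown earlier and approximates total running time as the end of the last logged collection, so the throughput figure is only an estimate.

```scala
import scala.io.Source

// Hypothetical helper for illustration; assumes the simple log format above.
object GcSummary {
  private val Line =
    """(\d+\.\d+): \[(?:Full GC|GC) \d+K->\d+K\((\d+)K\), (\d+\.\d+) secs\]""".r

  case class Summary(totalPauseSecs: Double, maxPauseSecs: Double,
                     throughput: Double, footprintK: Long)

  def summarize(lines: Seq[String]): Option[Summary] = {
    // (uptime, footprint in K, pause) for every line that matches the pattern
    val events = lines.map(_.trim).collect {
      case Line(uptime, total, pause) =>
        (uptime.toDouble, total.toLong, pause.toDouble)
    }
    if (events.isEmpty) None
    else {
      val pauses = events.map(_._3)
      val totalPause = pauses.sum
      val (lastUptime, _, lastPause) = events.last
      // Assumption: the program ended right after the last logged collection.
      val elapsed = lastUptime + lastPause
      Some(Summary(totalPause, pauses.max,
        1.0 - totalPause / elapsed, events.map(_._2).max))
    }
  }

  // Usage: scala GcSummary gc.log
  def main(args: Array[String]): Unit = {
    val source = Source.fromFile(args(0))
    try println(summarize(source.getLines().toList))
    finally source.close()
  }
}
```

For the six sample lines shown earlier this gives a total pause of roughly 5.4 ms and a throughput of about 97%, with the longest pause being the first collection.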

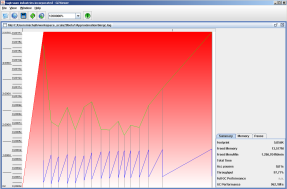

It is called GC Viewer and you can obtain it from the tagtraum.com website. This tool feeds on the log file that we generated previously. Below you can see a screenshot from this application.

Note: When I used -XX:+PrintGCDetails, GC Viewer had some problems.

Speeding up your application

Once you have discovered that it is indeed the garbage collector that slows down your application, you should optimize. What is more, I think it's almost always worthwhile to see if you can speed up your program. But how?

You must realize that there are different kinds of garbage collectors (which ones and how many depends on your JVM version). You may decide that another garbage collector would be better for your program. The Sun documentation provides a description of the collectors and information on choosing between them.

Obviously, there are a lot of parameters you can set, e.g. a throughput goal for your application (-XX:GCTimeRatio=<ratio>) or a maximum pause goal (-XX:MaxGCPauseMillis=<ms>). You can find a list of those parameters on the tagtraum.com page. You might want to consider tuning them.
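When experimenting with such parameters it is easy to run with different settings than you think, for example because JAVA_OPTS was set in another console window. A quick check from inside the program can help. The sketch below is an illustration only: JvmSettings is a made-up name, and it relies on the standard RuntimeMXBean and Runtime APIs.

```scala
import java.lang.management.ManagementFactory

// Hypothetical helper for illustration; not part of the original program.
object JvmSettings {
  // Arguments the JVM was actually started with, e.g. -Xmx16m.
  def jvmArgs(): Seq[String] = {
    val args = ManagementFactory.getRuntimeMXBean.getInputArguments
    (0 until args.size).map(i => args.get(i))
  }

  // Upper bound of the heap in megabytes, as reported by this JVM.
  def maxHeapMb(): Long = Runtime.getRuntime.maxMemory / (1024 * 1024)

  def main(args: Array[String]): Unit = {
    println("JVM arguments: " + jvmArgs().mkString(" "))
    println("Max heap: " + maxHeapMb() + "M")
  }
}
```

If a flag you set is missing from the printed arguments, the measurements you are about to compare were not taken with the configuration you intended.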

You should take a separate look at your generations - perhaps the problem lies there and you can fix it by resizing them (for instance, the bigger the young generation, the less often minor collections happen - but the more often major collections will).

Update 2010-04-02: The statement in parentheses above is true unless all objects die young, before they make it to the tenured generation. Thanks go to Vincent (see comments below).

Also, you can try to optimize your code. However, bear in mind that turning elegant code into disastrous chaotic madness to save precious milliseconds may not always prove worthwhile.

Scala

I played around a bit with my Scala program that approximates square roots. I managed to cut its running time almost in half. These are the parameters I used:

set JAVA_OPTS=-XX:+PrintGCDetails -Xloggc:gc.log -Xmx16m -Xms16m -Xmn2m

Further increasing the size of the new generation (-Xmn) decreased the number of collections and therefore increased the application speed. The price is that the program needs more memory.

Decreasing the new generation size means less memory is needed, but collections run more often, so the application is slower and throughput decreases.

It works this way here because in my application objects live a short life and die quickly. If it were otherwise, decreasing the new generation size would push objects into the tenured generation earlier, where they would be checked for garbage collection less often.
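If you want to repeat this kind of experiment without my program, a small allocation-heavy workload is enough. The sketch below is a stand-in, not my actual approximation code: SqrtGcDemo, the iteration counts and the use of BigDecimal are assumptions chosen only to produce plenty of short-lived objects. It reads collection counts and times through the standard GarbageCollectorMXBean API.

```scala
import java.lang.management.ManagementFactory

// Hypothetical stand-in workload; not the program discussed in this article.
object SqrtGcDemo {
  // Newton's method on BigDecimal (expects x > 0). Every step allocates
  // new short-lived objects, which keeps the young generation busy.
  def sqrt(x: BigDecimal, steps: Int): BigDecimal = {
    var guess = x / 2
    for (_ <- 1 to steps) guess = (guess + x / guess) / 2
    guess
  }

  // (collections, milliseconds) summed over all collectors of this JVM.
  def gcTotals(): (Long, Long) = {
    val beans = ManagementFactory.getGarbageCollectorMXBeans
    (0 until beans.size).foldLeft((0L, 0L)) { case ((count, millis), i) =>
      val bean = beans.get(i)
      // -1 means "undefined" for a collector; treat it as zero.
      (count + math.max(0L, bean.getCollectionCount),
        millis + math.max(0L, bean.getCollectionTime))
    }
  }

  def main(args: Array[String]): Unit = {
    val (count0, millis0) = gcTotals()
    val start = System.nanoTime()
    var last = BigDecimal(0)
    var i = 0
    while (i < 200000) { last = sqrt(BigDecimal(i + 2), 10); i += 1 }
    val elapsedMs = (System.nanoTime() - start) / 1000000
    val (count1, millis1) = gcTotals()
    println("last result: " + last)
    println("elapsed: " + elapsedMs + " ms, collections: " +
      (count1 - count0) + ", GC time: " + (millis1 - millis0) + " ms")
  }
}
```

Run it a few times with different -Xmn values in JAVA_OPTS and compare the number of collections and the GC time. The absolute numbers will depend on your machine and JVM, so only the trend between runs is meaningful.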

Read on

- Improving Java Application Performance and Scalability by Reducing Garbage Collection Times and Sizing Memory by Nagendra Nagarajayya and J. Steven Mayer

- Tuning Garbage Collection with the 5.0 Java[tm] Virtual Machine

- List of JVM flags for GC tuning

- GC Portal by Alka Gupta

- Java Performance Tuning

- by buying this book after clicking this link you help me run this website

Download source code

PS. When investigating performance, you may also want to try out these JVM options:

- -Xrunhprof - HPROF, a heap/CPU profiling tool

- -Xrunhprof:cpu=samples - HPROF with CPU profiling by sampling

- -Xprof - a simple method-level profiler

- -Xaprof - allocation profiling tool

PPS. There are more graphical tools, and some of them can be plugged into your IDE. However, when using the command line you can manipulate GC parameters more easily (but that's my opinion).

"the bigger the young generation, the less often minor collections happen - but the more often major collections will)"

I'm not sure this is true, I think it would depend on your average object lifetime.

If no object exists for more than a few milliseconds, why would there be more major collections? They should never make it to the old generation to begin with.

Hey Vincent, thanks for your comment.

Well, if an object lives a long life it will enter another generation (tenured). Then it is no longer subject to minor collections.

But you're right. If they all die young enough - then major collections will not run at all. I will update the article.

Best regards.

I think this is a very interesting subject to study: as more languages are created for (and migrate to) the JVM, there may be vastly different amounts and types of garbage generated by each.

I wonder if there is an easy way to see the average object lifetime, and compare Scala programs to Java programs.

My thought is Scala will generate more short-term garbage, but I don't have any proof.

The G1 Collector has some interesting properties, and though it was originally intended as a 'concurrent' collector (which usually sacrifices overall throughput), it's certainly possible it could be even faster for Scala-like programs.

Vincent, to partially answer your question, you might be interested in seeing the output generated by your program when the JVM is given the hprof option.

java -Xrunhprof[:options] your_program

It will generate a report for heap usage. If you ask it to generate a binary report (Xrunhprof:format=b), you can analyze it with Eclipse Memory Analyzer.

I ran this for my Scala program and I guess the result was similar to the one you predicted. Quite a lot of Scala garbage, it even detected two potential memory leaks.

Perhaps you'd like to try out this program yourself. It has quite a few options and you might find what you are looking for.

http://www.eclipse.org/mat/

Good garbage optimization is better than no garbage optimization.

Can't argue on that one :)

Hi, thanks for the brief overview. Enjoyed it. I am facing another issue: PermGen space after redeployment, a well-known issue on the web forums. Can you please suggest how to look for details on it? Or can you point me to some place where I can get a little more help?

jigarshah.net, I am not sure, but maybe this can help you with the PermGen problem, see http://www.tomcatexpert.com/blog/2010/04/06/tomcats-new-memory-leak-prevention-and-detection

Thanks for all your replies.

@jigarshah.net

You have these parameters you can pass on to the server:

-XX:PermSize=

-XX:MaxPermSize=

As far as I know, the server is responsible for this, it has a memory leak of some sort. I found more information here:

http://www.jroller.com/agileanswers/entry/preventing_java_s_java_lang

"The message is a symptom of an incomplete garbage collection sweep where resources are not properly released upon unload/restart. [...] the finger of blame has been pointed at CGLIB, Hibernate, Tomcat, and even Sun's JVM."
